Wikipedia and the Wisdom of the Crowd

How a Free Encyclopedia Disrupted Expertise

On January 15, 2001, a website went live that contained almost nothing—a handful of stub articles, a blank editing interface, and a deceptively simple premise: anyone could write it. No credentials required. No editorial gatekeeping. No payment. Just a web browser and an opinion.

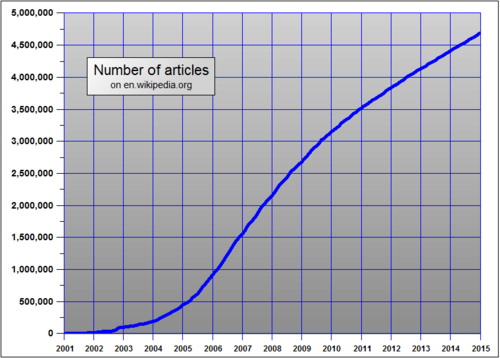

Within three years, Wikipedia had more articles than the Encyclopaedia Britannica. Within a decade, it had become the largest reference work in human history. By almost any measure, it was an astonishing success—and one of the most unsettling intellectual disruptions of the twenty-first century. Not because it failed, but because it worked.

The story of Wikipedia is not really a story about technology. It is a story about who gets to be an expert, who decides what counts as reliable knowledge, and what happens when those gatekeeping functions are suddenly thrown open to everyone.

The World Wikipedia Disrupted

The Encyclopaedia Britannica’s multi-volume sets were symbols of authoritative knowledge—and the standard against which Wikipedia would soon be measured.

To understand why Wikipedia was so threatening, you have to understand what it replaced.

The Encyclopaedia Britannica was founded in Edinburgh in 1768 with an explicit promise: its articles would be written by recognized authorities in each field. That model—credentialed experts producing vetted, stable knowledge—defined reference works for two centuries. By the twentieth century, Britannica had become a cultural institution. Owning a set was a marker of middle-class aspiration; its contributors included Nobel laureates and heads of state.

But it was also slow, expensive, and inevitably incomplete. A new edition took years to produce. Corrections were nearly impossible once printed. And the definition of “expert” it relied on (credentialed, Western, predominantly male) excluded enormous domains of knowledge.

Wikipedia’s predecessor, Nupedia, tried to preserve this model for the web. Launched in 2000 by Jimmy Wales and Larry Sanger, Nupedia required peer review by credentialed academics before articles could be published. The result was agonizingly slow: after two years, Nupedia had produced just twenty-four complete articles. The expert model, it turned out, did not scale.

The question Nupedia’s failure raised was not “how do we do expert review faster?” It was more radical: what if expert review is the wrong mechanism entirely?

Anyone Can Edit

Ward Cunningham had invented the “wiki” in 1995—a website that any visitor could edit directly, with changes appearing instantly. The concept was designed for software developers sharing documentation. When Larry Sanger suggested in January 2001 that Nupedia try a wiki as a “feeder” system for rough drafts, Wales agreed. Within days the wiki had more activity than Nupedia had seen in months.

The key insight was almost counterintuitive: the lack of a barrier to entry was a feature, not a bug. Removing the need for credentials meant removing the bottleneck. Wikipedia’s growth was explosive precisely because it was unguarded. Anyone with knowledge—formal or informal, credentialed or accumulated through decades of lived experience—could contribute.

This was not, at the time, considered a serious proposal for producing reliable knowledge. The experts said so, loudly.

The Wiki Model: A Different Theory of Knowledge

Traditional encyclopedias assumed that reliable knowledge flows downward: from credentialed experts to the reading public.

Wikipedia inverted this. It assumed knowledge could flow upward and sideways—from anyone who knew something to everyone who needed it. Errors would be caught not by editors in advance, but by readers and other contributors over time.

This is sometimes called the ‘bazaar’ model, as opposed to the ‘cathedral’ model of traditional scholarly publishing, a pair of terms borrowed from Eric S. Raymond’s writing on open-source software. The cathedral is designed by architects and built according to a plan. The bazaar is chaotic, self-organizing, and, against all expectations, often works.

The legal scholar Yochai Benkler called this ‘commons-based peer production,’ arguing in a landmark 2002 Yale Law Journal article that it represented a genuinely new mode of knowledge production: neither market-based nor state-organized, but collaborative and distributed.

The Critics Respond

The backlash was immediate and, in some quarters, never stopped.

Robert McHenry, a former editor-in-chief of the Encyclopaedia Britannica, published a widely cited essay in 2004 called “The Faith-Based Encyclopedia.” His argument was withering: Wikipedia’s quality was not just unpredictable but unknowable. Because any article could be edited at any moment by anyone, a reader had no way of knowing whether what they were reading had been there for three years or three minutes. He compared the Wikipedia reader to a visitor to a public restroom, who cannot know who used the facilities before him. A user “may or may not be informed, but is, in any case, not accountable and not qualifiable.”

Andrew Keen’s The Cult of the Amateur (2007) raised the stakes further. Keen argued that Wikipedia was not democratizing knowledge but destroying it—replacing the careful, slow work of expertise with the instant, unreliable noise of crowd opinion. “The digital revolution’s democratic ideology,” he wrote, “is undermining cultural standards and belittling real knowledge.”

These critiques were not wrong, exactly. They were describing real risks. But they were also defending a model of expertise that had its own exclusions and blind spots—ones that Wikipedia’s critics were often slow to acknowledge.

The Famous Test

In December 2005, the science journal Nature published a brief but explosive study.

The Nature study, led by journalist Jim Giles, asked subject-matter experts to evaluate 42 matched pairs of science articles from Wikipedia and Britannica without knowing which was which. The results were striking: reviewers found 162 factual errors, omissions, or misleading statements in Wikipedia and 123 in Britannica, or roughly four per article against three. Both contained “serious errors,” according to the reviewers. The gap in reliability was far smaller than the gap in cultural prestige, and the finding sent shockwaves through both the encyclopedic establishment and the open-source community.

Britannica’s response was furious. The company published a lengthy rebuttal disputing the methodology. But the study had landed a lasting blow to the assumption that formal editorial structures were necessary for reliable information. Tom Nichols, writing more than a decade later in The Death of Expertise (2017), worried that findings like these had given “ordinary citizens” permission to distrust expertise altogether—that the lesson taken from Wikipedia was not “crowd review can sometimes approximate expert review” but “experts aren’t necessary.”

His concern reflected something real: the disruption Wikipedia represented was not merely institutional. It was epistemological.

What Critics Got Right

Wikipedia’s success did not resolve the question of reliability—it complicated it.

Research published throughout the 2000s and 2010s documented systematic problems. The “gender gap” was perhaps most striking: studies consistently found that fewer than fifteen percent of Wikipedia’s editors were women, and that articles on topics associated with women and women’s interests were systematically shorter, less sourced, and more likely to be flagged for deletion. Minority communities, non-Western knowledge traditions, and contested scientific topics faced similar structural underrepresentation.

Clay Shirky, in Here Comes Everybody (2008), celebrated Wikipedia as proof that “organizing without organizations” could solve problems that traditional institutions couldn’t. But the scholars who studied Wikipedia’s actual editing communities found something more complicated: a self-selected group of predominantly white, Western, technically literate, male editors who imported their own assumptions about what counted as “notable” and “verifiable” just as surely as Britannica’s credentialed experts had.

The crowd, it turned out, was not neutral. It was a crowd.

Steven Shapin’s A Social History of Truth (1994) helps illuminate why this should not have been surprising. Shapin showed that even in seventeenth-century scientific communities, what counted as reliable knowledge was never purely a matter of evidence—it depended on who was speaking, what social position they occupied, and who trusted them. Wikipedia changed the form of those social negotiations without eliminating them. The question of whose knowledge counted was still being answered, just by different mechanisms: edit wars, administrator decisions, notability debates, and the informal hierarchies of a volunteer community.

What Actually Happened

Wikipedia’s growth from a few dozen articles to more than sixty million across hundreds of languages represents one of the most remarkable feats of collective knowledge production in history.

By the time critics had fully articulated their concerns, Wikipedia had already transformed the information landscape.

The Encyclopaedia Britannica announced in 2012 that it would end its print edition after 244 years. The market for expensive, multi-volume reference works had simply collapsed. Britannica pivoted to digital subscription services, but it was adapting to a world Wikipedia had already remade.

Wikipedia itself was not static. Its reliability improved substantially over the years as the community developed more sophisticated quality-control mechanisms. Scholars like Joseph Reagle, in Good Faith Collaboration (2010), documented how Wikipedia had developed a complex culture of norms, arbitration processes, and editorial standards—different in form from traditional peer review, but not entirely unlike it in function.

The journalist Nicholson Baker, writing in the New York Review of Books in 2008, captured something the critics missed: Wikipedia was not finished. It was a living document—not a cathedral built once and maintained, but a garden that grew and changed and healed itself. That was strange and unprecedented. But it was not the same as being worthless.

Andrew Lih’s The Wikipedia Revolution (2009) documented how the project had navigated its own contradictions—open yet governed, egalitarian yet hierarchical, unfinished yet increasingly relied upon by millions. The disruption Wikipedia represented was real. But so was its resilience.

The Deeper Question

What Wikipedia actually disrupted was not just the encyclopedia business. It disrupted a centuries-old assumption about the relationship between credentials and knowledge—the idea that reliable information requires not just evidence but a certified expert to vouch for it.

The Credential Problem

Adrian Johns demonstrated in The Nature of the Book (1998) that the authority of printed texts was not inherent—it had to be constructed. Early print culture was chaotic, filled with forgeries, unauthorized editions, and unreliable reproductions. Trust in the printed word emerged slowly, through booksellers’ reputations, readers’ networks, and institutional endorsements.

Wikipedia compressed that process dramatically, and made it visible. The negotiations over what counted as reliable knowledge—which had always happened, but usually behind institutional walls—now happened in public, in edit histories, on talk pages, in deletion debates.

This is what made Wikipedia historically significant, and what makes it relevant to debates about AI today: it forced the question of how we know what we know into plain view. The answer was never simply ‘experts said so.’ It was always more complicated. Wikipedia just made the complication impossible to ignore.

Neil Postman warned in Amusing Ourselves to Death (1985) that every new medium changes not just what we communicate but how we think about what is worth communicating. Wikipedia changed the medium of reference knowledge—and in doing so, it changed what questions we ask about knowledge itself.

Connection to AI

The debates about Wikipedia in the 2000s and 2010s sound strikingly familiar to debates about AI today.

Is the output reliable? Who is responsible when it’s wrong? Does it flatten nuance in ways that look like knowledge but aren’t? Does it encode the biases of its creators without acknowledging them? Can we trust something that cannot tell us, in any meaningful sense, why it said what it said?

These are the right questions. And the Wikipedia case suggests they don’t have clean answers.

What Wikipedia showed is that disruptions to expert authority are not simply liberating or simply dangerous—they are both, and the balance shifts depending on what you’re looking for, who you are, and what you already know. A Wikipedia article on a well-documented scientific topic is often genuinely reliable. An article on a contested political event, or a figure from a marginalized community, or a topic outside the dominant culture of its editors, is often not.

AI presents the same asymmetry at greater scale and higher speed. Understanding what Wikipedia got right, what it got wrong, and why—not as a story of technology, but as a story of social power, epistemic trust, and the politics of knowledge—is essential preparation for navigating what comes next.

How I Used AI for This Essay

This essay was researched and written with the assistance of Claude, an AI assistant made by Anthropic.

AI was useful for: generating an initial outline, suggesting relevant historical figures and events, and helping draft transitions between sections. I used it as a starting point—a well-read research assistant who could quickly surface names and dates and connections.

AI was not a reliable source for specific claims, quotations, or bibliographic details. Every source cited here was verified through actual databases (JSTOR, Google Scholar, library catalogs) or the original texts. Several sources that an AI initially suggested turned out to have incorrect publication dates, wrong page numbers, or—in two cases—to not exist in the form described. AI is not a substitute for source verification; it is a starting point for research, not an endpoint.

The deeper limitation: AI flattened the historiographic debates. When I asked it to explain “the significance of Wikipedia,” it gave a confident, balanced-sounding answer that missed exactly the tensions and contradictions that make the history interesting. Historians argue. They disagree. They revise. AI tends to synthesize and harmonize. The most important intellectual work in this essay—deciding what the evidence actually means—was mine to do.

Bibliography

Baker, Nicholson. “The Charms of Wikipedia.” New York Review of Books, March 20, 2008.

Benkler, Yochai. “Coase’s Penguin, or, Linux and ‘The Nature of the Firm.’” Yale Law Journal 112, no. 3 (2002): 369–446.

Giles, Jim. “Internet Encyclopaedias Go Head to Head.” Nature 438, no. 7070 (December 2005): 900–901.

Johns, Adrian. The Nature of the Book: Print and Knowledge in the Making. Chicago: University of Chicago Press, 1998.

Keen, Andrew. The Cult of the Amateur: How Today’s Internet Is Killing Our Culture. New York: Doubleday, 2007.

Lih, Andrew. The Wikipedia Revolution: How a Bunch of Nobodies Created the World’s Greatest Encyclopedia. New York: Hyperion, 2009.

McHenry, Robert. “The Faith-Based Encyclopedia.” TCS Daily, November 15, 2004.

Nichols, Tom. The Death of Expertise: The Campaign Against Established Knowledge and Why It Matters. New York: Oxford University Press, 2017.

Postman, Neil. Amusing Ourselves to Death: Public Discourse in the Age of Show Business. New York: Viking, 1985.

Reagle, Joseph M. Good Faith Collaboration: The Culture of Wikipedia. Cambridge: MIT Press, 2010.

Sanger, Larry. “The Early History of Nupedia and Wikipedia: A Memoir.” Slashdot, April 2005.

Shapin, Steven. A Social History of Truth: Civility and Science in Seventeenth-Century England. Chicago: University of Chicago Press, 1994.

Shirky, Clay. Here Comes Everybody: The Power of Organizing Without Organizations. New York: Penguin Press, 2008.

Wales, Jimmy. “Wikipedia Is an Encyclopedia.” Wikipedia-l mailing list, March 8, 2005.